What are GBM models?

A Gradient Boosting Machine or GBM combines the predictions from multiple decision trees to generate the final predictions. … So, every successive decision tree is built on the errors of the previous trees. This is how the trees in a gradient boosting machine algorithm are built sequentially.
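The idea of building each tree on the errors of the previous trees can be sketched in a few lines. The following is a minimal illustration, not a production implementation: it assumes one numeric feature, squared-error loss, and one-split trees (stumps). The function names `fit_stump`, `fit_gbm`, and `predict_gbm` and the toy data are hypothetical.

```python
from statistics import mean


def fit_stump(xs, targets):
    """Fit a one-split regression tree (stump) by minimizing squared error.

    Assumes xs contains at least two distinct values.
    """
    best = None
    for threshold in sorted(set(xs))[:-1]:  # candidate split points
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_value, right_value = mean(left), mean(right)
        sse = sum((t - left_value) ** 2 for t in left)
        sse += sum((t - right_value) ** 2 for t in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_value, right_value)
    return best[1:]  # (threshold, left_value, right_value)


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_gbm(xs, ys, n_trees=50, learning_rate=0.1):
    """Sequentially fit stumps, each one to the current residuals."""
    base = mean(ys)  # the initial prediction is the mean target
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_trees):
        # Errors of the ensemble built so far.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        # Add a shrunken copy of the new stump to the running prediction.
        preds = [p + learning_rate * predict_stump(stump, x)
                 for p, x in zip(preds, xs)]
    return {"base": base, "learning_rate": learning_rate, "stumps": stumps}


def predict_gbm(model, x):
    correction = sum(predict_stump(s, x) for s in model["stumps"])
    return model["base"] + model["learning_rate"] * correction


def mse(ys, preds):
    return mean((y - p) ** 2 for y, p in zip(ys, preds))


# Toy data (made up for illustration): a step-like relationship.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]
model = fit_gbm(xs, ys)
print("baseline MSE:", mse(ys, [mean(ys)] * len(ys)))
print("boosted MSE: ", mse(ys, [predict_gbm(model, x) for x in xs]))
```

Each stump on its own is a weak model. The ensemble improves because every new stump is fit to what the previous ones still get wrong.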

What is GBM classifier?

Gradient boosting refers to a class of ensemble machine learning algorithms that can be used for classification or regression predictive modeling problems. Gradient boosting is also known as gradient tree boosting, stochastic gradient boosting (an extension), and gradient boosting machines, or GBM for short.

What is a boosted tree model?

Boosted Regression Tree (BRT) models are a combination of two techniques: decision tree algorithms and boosting methods. Like Random Forest models, BRTs repeatedly fit many decision trees to improve the accuracy of the model.

How does a gradient boosting model work?

Gradient boosting is a type of machine learning boosting. It relies on the intuition that the best possible next model, when combined with previous models, minimizes the overall prediction error. … If a small change in the prediction for a case causes no change in error, then the next target outcome of the case is zero.

Can GBM be used for regression?

Yes. Gradient boosting produces a predictive model from an ensemble of weak predictive models, and it can be used for both regression and classification problems.

Is CatBoost better than LightGBM?

It depends on the dataset. In one comparison, CatBoost and LightGBM regression models were built on the California house pricing dataset. There, LightGBM slightly outperformed CatBoost and was about 2 times faster.

Who uses CatBoost?

CatBoost is an algorithm for gradient boosting on decision trees. It is developed by Yandex researchers and engineers, and is used for search, recommendation systems, personal assistant, self-driving cars, weather prediction and many other tasks at Yandex and in other companies, including CERN, Cloudflare, Careem taxi.

How can I improve my GBM performance?

- Choose a relatively high learning rate. …

- Determine the optimum number of trees for this learning rate. …

- Tune tree-specific parameters for decided learning rate and number of trees. …

- Lower the learning rate and increase the estimators proportionally to get more robust models.
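The last step can be sketched as a small helper that generates candidate settings. This is only an illustration under the assumption that "proportionally" means keeping the product of learning rate and number of trees constant. The name `learning_rate_schedule` and its parameters are hypothetical and do not belong to any library.

```python
def learning_rate_schedule(learning_rate, n_estimators, steps=3, factor=2):
    """Return candidate (learning_rate, n_estimators) pairs.

    Each step divides the learning rate by `factor` and multiplies the
    number of trees by the same factor, so their product stays constant.
    """
    schedule = []
    for step in range(steps):
        scale = factor ** step
        schedule.append((learning_rate / scale, n_estimators * scale))
    return schedule


# Start from a relatively high learning rate and its tuned tree count
# (both values here are made up), then try progressively slower settings.
for lr, n_trees in learning_rate_schedule(0.1, 100):
    print(f"learning_rate={lr}, n_estimators={n_trees}")
```

Each candidate pair would still need to be checked with cross-validation, since a lower learning rate costs more training time and does not always improve the model.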

How does GBM algorithm work?

The gradient boosting algorithm (gbm) can be most easily explained by first introducing the AdaBoost Algorithm. The AdaBoost Algorithm begins by training a decision tree in which each observation is assigned an equal weight. … Gradient Boosting trains many models in a gradual, additive and sequential manner.
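To make the AdaBoost starting point concrete, here is a minimal sketch of a single reweighting round. It assumes the standard discrete AdaBoost update for binary classification, which the text above does not spell out. The function name `adaboost_round` and the example data are hypothetical.

```python
import math


def adaboost_round(weights, correct):
    """Run one AdaBoost reweighting round.

    weights: current observation weights, summing to 1
    correct: booleans, True where the weak learner was right
    Returns (alpha, new_weights), where alpha is the learner's vote weight.
    """
    error = sum(w for w, ok in zip(weights, correct) if not ok)
    if not 0.0 < error < 1.0:
        raise ValueError("weighted error must be strictly between 0 and 1")
    alpha = 0.5 * math.log((1.0 - error) / error)
    # Misclassified observations are up-weighted, correct ones down-weighted.
    updated = [w * math.exp(-alpha if ok else alpha)
               for w, ok in zip(weights, correct)]
    total = sum(updated)
    return alpha, [w / total for w in updated]


# Five observations start with equal weight; the first tree gets one wrong.
start = [0.2] * 5
alpha, new_weights = adaboost_round(start, [True, True, True, True, False])
print("alpha:", alpha)
print("new weights:", new_weights)
```

After the update, the misclassified observation carries as much weight as all the correctly classified ones together, so the next tree concentrates on it. Gradient boosting replaces this reweighting with fitting each new model to the gradient of a loss function.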

What problems is gradient boosting good for?

Gradient boosting is generally used when we want to reduce bias error. It can be used for regression as well as classification problems. In regression problems, the cost function is typically MSE, whereas in classification problems it is typically log-loss.
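The two cost functions can be written out directly, together with the negative gradients that each new tree is fit to. This is a minimal sketch assuming binary labels in {0, 1} for log-loss and a half squared error for the regression gradient. All function names are hypothetical.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error, the usual regression cost."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def log_loss(y_true, prob, eps=1e-15):
    """Binary log-loss; prob holds predicted probabilities of class 1."""
    total = 0.0
    for y, p in zip(y_true, prob):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def negative_gradient_squared_error(y, prediction):
    """Negative gradient of 0.5 * (y - F)^2 with respect to F: the residual."""
    return y - prediction


def negative_gradient_log_loss(y, raw_score):
    """Negative gradient of log-loss with respect to the raw (log-odds) score."""
    return y - sigmoid(raw_score)


print(mse([1.0, 2.0], [1.0, 4.0]))
print(log_loss([1, 0], [0.9, 0.2]))
print(negative_gradient_log_loss(1, 0.0))
```

In both cases the negative gradient has the form "observed minus predicted", which is why gradient boosting is often described as fitting trees to residuals.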

Is Random Forest bagging or boosting?

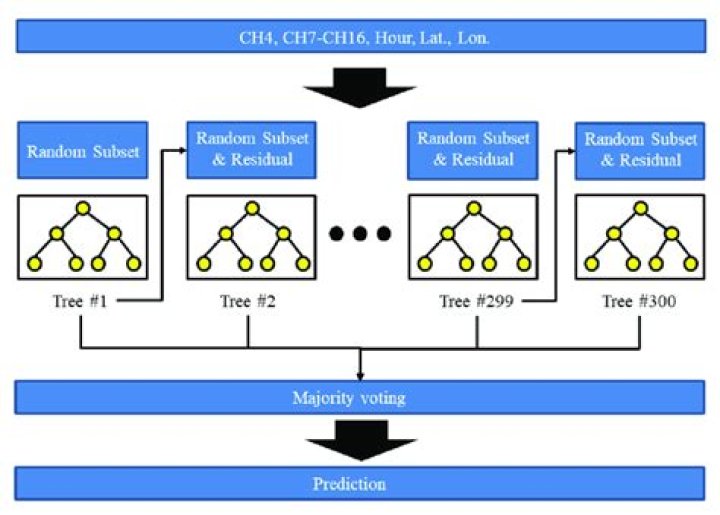

The random forest algorithm is actually a bagging algorithm: as in bagging, we draw random bootstrap samples from the training set. However, in addition to the bootstrap samples, random forests also draw random subsets of features for training the individual trees, whereas in plain bagging each tree is given the full set of features.
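The difference between the two sampling schemes can be sketched without any tree-fitting code. The example below only plans which rows and features each tree would see. It assumes one feature subset per tree, as described above, whereas many random forest implementations draw a fresh subset at every split. The function `plan_trees` and its parameters are hypothetical.

```python
import random


def plan_trees(n_rows, n_features, n_trees, max_features=None, seed=0):
    """Return one (row_indices, feature_indices) pair per tree.

    max_features=None mimics plain bagging (every feature is available);
    a smaller value mimics the random-forest feature subsampling.
    """
    rng = random.Random(seed)
    k = n_features if max_features is None else max_features
    plans = []
    for _ in range(n_trees):
        # Bootstrap sample: draw n_rows row indices with replacement.
        rows = [rng.randrange(n_rows) for _ in range(n_rows)]
        # Feature subset: draw k distinct feature indices.
        features = sorted(rng.sample(range(n_features), k))
        plans.append((rows, features))
    return plans


bagging = plan_trees(n_rows=10, n_features=6, n_trees=3)
forest = plan_trees(n_rows=10, n_features=6, n_trees=3, max_features=2)
for rows, features in forest:
    print("rows:", rows, "features:", features)
```

Restricting the features makes the individual trees less correlated with one another, which is what lets averaging them reduce variance further than plain bagging.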

Does XGBoost use cart?

Yes. XGBoost uses Classification and Regression Trees (CART) as its base learners to predict the outcome variable.

What is the XGBoost model?

XGBoost is a decision-tree-based ensemble Machine Learning algorithm that uses a gradient boosting framework. … The algorithm differentiates itself in the following ways: A wide range of applications: Can be used to solve regression, classification, ranking, and user-defined prediction problems.

Is AdaBoost gradient boosting?

AdaBoost was the first boosting algorithm to be designed, and it uses a particular loss function. Gradient Boosting, on the other hand, is a generic algorithm for finding approximate solutions to the additive modelling problem. This makes Gradient Boosting more flexible than AdaBoost.

Is XGBoost in scikit-learn?

XGBoost is not part of scikit-learn itself, but its Python package provides a scikit-learn-compatible API, so it is easy to use alongside scikit-learn. XGBoost is an ensemble, so it often scores better than individual models. XGBoost is regularized, so default models often don’t overfit. … XGBoost learns from its mistakes (gradient boosting).

Is gradient boosting supervised or unsupervised?

Gradient boosting (derived from the term gradient boosting machines) is a popular supervised machine learning technique for regression and classification problems that aggregates an ensemble of weak individual models to obtain a more accurate final model.

Why should you learn CatBoost now?

CatBoost tends to build one of the most accurate models on whatever dataset you feed it while requiring minimal data prep. It also provides strong open-source interpretation tools and a way to productionize your model quickly.

What's so special about CatBoost?

CatBoost has a very low prediction time thanks to its symmetric tree structure. It is reported to be about 8x faster than XGBoost when predicting.

How does a CatBoost classifier work?

CatBoost is a recently open-sourced machine learning algorithm from Yandex. … It yields state-of-the-art results without the extensive data training typically required by other machine learning methods, and it provides powerful out-of-the-box support for the more descriptive data formats that accompany many business problems.

Can LightGBM handle categorical features?

Similar to CatBoost, LightGBM can also handle categorical features when given the names of the categorical features as input. It does not convert them to one-hot encoding, and its approach is much faster than one-hot encoding. LightGBM uses a special algorithm to find the split value of categorical features.

Which boosting algorithm is best?

Gradient Boosting: In the gradient boosting algorithm, we train multiple models sequentially, and each new model gradually minimizes the loss function using the gradient descent method.

Is GBM better than random forest?

If you carefully tune parameters, gradient boosting can result in better performance than random forests. However, gradient boosting may not be a good choice if you have a lot of noise, as it can result in overfitting. It also tends to be harder to tune than random forests.

What is gradient boosting Regressor?

Gradient Boosting for regression. GB builds an additive model in a forward stage-wise fashion; it allows for the optimization of arbitrary differentiable loss functions. In each stage a regression tree is fit on the negative gradient of the given loss function.

What is statistical boost?

In predictive modeling, boosting is an iterative ensemble method that starts out by applying a classification algorithm and generating classifications. The idea is to concentrate the iterative learning process on the hard-to-classify cases. …

What is the type of SVM learning?

“Support Vector Machine” (SVM) is a supervised machine learning algorithm that can be used for both classification and regression challenges. However, it is mostly used in classification problems. … The SVM classifier is a frontier (a hyperplane or line) that best segregates the two classes.

What is GBM model in R?

The gbm R package is an implementation of extensions to Freund and Schapire’s AdaBoost algorithm and Friedman’s gradient boosting machine. This is the original R implementation of GBM.

What is interaction depth in GBM?

interaction.depth = 1: additive model; interaction.depth = 2: two-way interactions, etc. In the gbm R package each split produces three child nodes (left, right, and a node for missing values), so each split increases the total number of nodes by 3 and the number of terminal nodes by 2. A tree with N splits therefore has 3∗N+1 nodes in total, of which 2∗N+1 are terminal nodes.
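The node counts can be checked by simulating the splits one at a time. This is a small sketch that takes the counting rule from the text as given: each split adds three nodes in total and a net two terminal nodes, which corresponds to a split creating a left, a right, and a missing-value child. The function name `gbm_tree_node_counts` is hypothetical.

```python
def gbm_tree_node_counts(n_splits):
    """Return (total_nodes, terminal_nodes) for a tree with n_splits splits."""
    total, terminal = 1, 1  # a tree with no splits is a single terminal root
    for _ in range(n_splits):
        total += 3     # three new child nodes are created
        terminal += 2  # three new terminals, minus the node that was split
    return total, terminal


for n in range(4):
    total, terminal = gbm_tree_node_counts(n)
    # Both counts should agree with the closed forms 3N+1 and 2N+1.
    print(n, total, terminal, total == 3 * n + 1, terminal == 2 * n + 1)
```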

What is a gradient boosted decision tree?

Gradient boosting is a machine learning technique used in regression and classification tasks, among others. When a decision tree is the weak learner, the resulting algorithm is called gradient-boosted trees; it usually outperforms random forest. …

What is Nodesize in random forest?

The nodesize parameter specifies the minimum number of observations in a terminal node. Setting it lower leads to trees with a larger depth which means that more splits are performed until the terminal nodes. In several standard software packages the default value is 1 for classification and 5 for regression.

Does bagging eliminate Overfitting?

Bagging attempts to reduce the chance of overfitting complex models. It trains a large number of “strong” learners in parallel. A strong learner is a model that’s relatively unconstrained. Bagging then combines all the strong learners together in order to “smooth out” their predictions.

What is bootstrap random forest?

Random Forest is one of the most popular and most powerful machine learning algorithms. It is a type of ensemble machine learning algorithm called Bootstrap Aggregation or bagging. … The Random Forest algorithm makes a small tweak to bagging that results in a very powerful classifier.